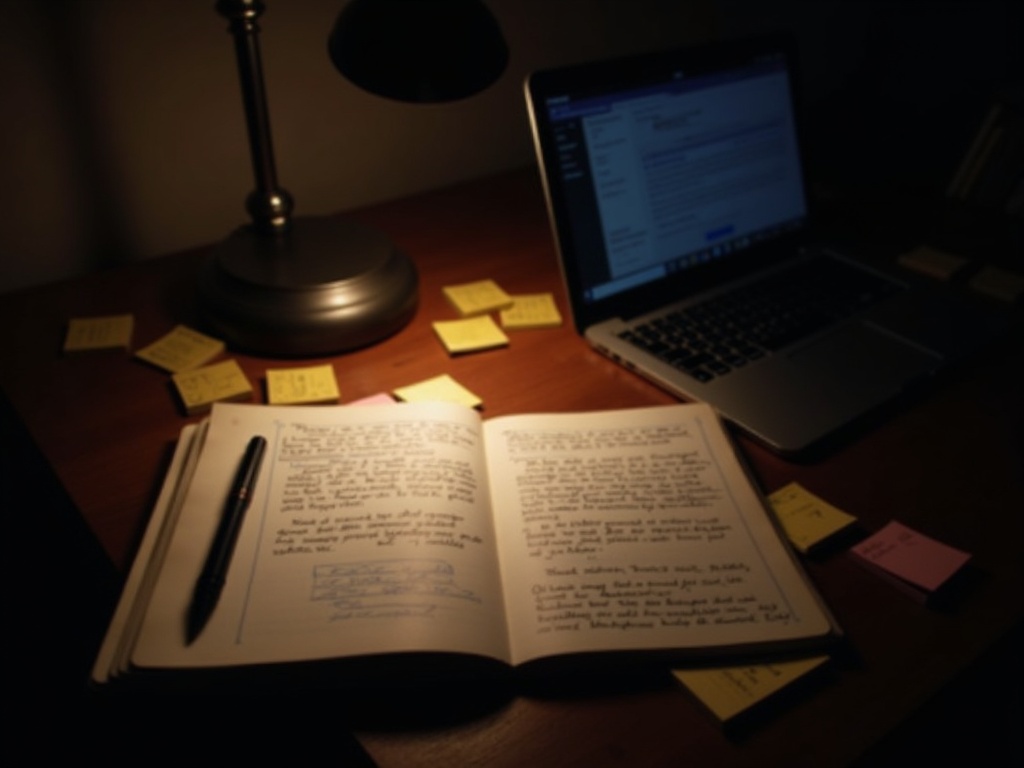

Claude + Obsidian + NotebookLM: My AI Research Stack

Every developer I know has the same problem: information is everywhere and nowhere. Your notes are in one app, your code is in another, your research is spread across 47 browser tabs, and the brilliant insight you had last Tuesday is buried in a Slack thread you'll never find again.

I spent years trying different tools to fix this. Notion, Evernote, Roam, Apple Notes, plain text files in random directories. None of them solved the core issue: I need to research something, understand it deeply, turn it into code, and then document what I built — and I need those steps to actually connect to each other instead of being isolated activities in isolated apps.

What finally worked was combining three tools that each handle one part of that loop: Obsidian for knowledge, NotebookLM for research synthesis, and Claude Code for execution. They weren't designed to work together, but they do, because what passes between them is always the same thing: plain text and files on disk.

The Problem: Context Fragmentation

Before I describe the solution, let me be specific about what wasn't working.

I'd be researching a new API for a client project. I'd read the documentation in my browser, maybe watch a YouTube tutorial, download a PDF with architecture examples. I'd make notes in whatever app was open. Then I'd switch to my editor and start coding. Two hours later, I'd need to check something in the docs, but I'd have lost the tab. The note I made about rate limits would be in a different app from the note about authentication. And three weeks later, when a colleague asked how the integration worked, I'd have to re-derive everything from memory because my notes were scattered across four platforms.

The technical term for this is "context switching tax," but the practical term is "wasting half my day looking for things I already figured out."

What I needed was:

- A single place for all knowledge that I actually own (not a cloud service's database)

- A way to synthesize complex sources quickly without reading 50-page PDFs cover to cover

- An execution tool that can read my notes and act on them directly

- Everything connected, so research flows into notes, notes flow into code, and code flows into documentation

Obsidian: The Knowledge Base

Obsidian became the foundation because of three properties that set it apart from most of the tools I'd tried.

Markdown-first. Every note is a plain .md file on your filesystem. Not a proprietary database. Not a JSON blob stored in someone else's cloud. Files that any text editor can open, any tool can process, and any version control system can track. This matters because it means my knowledge base isn't locked into Obsidian. If the company disappears tomorrow, every note I've ever written is still right there on my hard drive in a universal format.

Local-first. Nothing leaves my machine unless I explicitly push it. For client projects with sensitive code, architecture diagrams, and API credentials in my notes, this isn't optional; it's a requirement. Most cloud-based note tools store your notes on their servers, and their privacy policies can change at any time.

Git-synced. This is the multiplier. Because the vault is just a folder of files, I keep my entire Obsidian vault in a Git repository. Every committed edit is tracked. I can see exactly what I added to my notes about the Stripe API on February 14th. I can diff my understanding of a system between last month and today. I sync between my MacBook and my Fedora laptop via Git push/pull to my home NAS. No Dropbox, no iCloud, no third-party sync service.
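For illustration, here is a minimal sketch of what one sync pass over a vault could look like. It is not my exact script: the function names are made up for this example, and it assumes the vault is already a Git repository with an upstream remote (such as the NAS) configured.

```python
import subprocess
from pathlib import Path


def has_changes(vault: Path) -> bool:
    """Return True if the vault's working tree has uncommitted changes."""
    result = subprocess.run(
        ["git", "status", "--porcelain"],
        cwd=vault, capture_output=True, text=True, check=True,
    )
    return bool(result.stdout.strip())


def sync_commands(message: str, dirty: bool) -> list[list[str]]:
    """Build the git commands for one sync pass (hypothetical helper).

    Local edits are committed first, so the rebase has a clean tree to work on.
    """
    commands: list[list[str]] = []
    if dirty:
        commands.append(["git", "add", "--all"])
        commands.append(["git", "commit", "-m", message])
    commands.append(["git", "pull", "--rebase"])
    commands.append(["git", "push"])
    return commands


def sync_vault(vault: Path, message: str = "vault sync") -> None:
    """Commit local edits (if any), pull remote edits, then push."""
    for command in sync_commands(message, has_changes(vault)):
        # check=True stops at the first failure, e.g. a rebase conflict.
        subprocess.run(command, cwd=vault, check=True)
```

Calling `sync_vault(Path.home() / "vault")` on each machine before and after a work session would be one way to use it. A rebase conflict stops the run, so it can be resolved by hand.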

The bi-directional linking is what makes Obsidian genuinely useful for knowledge management rather than just note storage. When I create a note about a specific API, I link it to the project note, the deployment note, and the general concepts note about authentication patterns. Over time, these connections form a web that mirrors how the knowledge actually relates. Finding something three months later takes seconds, not the 20-minute scavenger hunt I used to endure.
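Because the links are just `[[wikilink]]` text inside Markdown files, the backlink graph can be rebuilt outside Obsidian too. The sketch below is an approximation, not Obsidian's own resolver: it assumes notes are identified by file name without the extension, and it handles only the common `[[note]]`, `[[note|alias]]`, and `[[note#heading]]` forms.

```python
import re
from collections import defaultdict
from pathlib import Path

# Captures the note name; an optional #heading and |alias are matched but dropped.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def outgoing_links(text: str) -> set[str]:
    """Return the set of note names that this text links to."""
    return {name.strip() for name in WIKILINK.findall(text)}


def backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Map each linked-to note name to the set of notes that link to it."""
    index: dict[str, set[str]] = defaultdict(set)
    for name, text in notes.items():
        for target in outgoing_links(text):
            index[target].add(name)
    return dict(index)


def load_vault(vault: Path) -> dict[str, str]:
    """Read every .md file under the vault, keyed by file name without extension."""
    return {path.stem: path.read_text(encoding="utf-8") for path in vault.rglob("*.md")}
```

Running `backlinks(load_vault(Path.home() / "vault"))` gives a plain dictionary that any script, or Claude Code itself, can query for the notes that reference a given topic.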

Claude Code: The Execution Engine

Obsidian stores and structures knowledge. But knowledge sitting in a vault doesn't build anything. I need something that can read those notes and turn them into working code, deployed to a server, with tests passing. That's Claude Code.

This is where most people's mental model breaks. They think of AI assistants as chatbots — you type a question, it gives an answer. Claude Code is fundamentally different. It's a CLI tool that can read files on your disk, write files, run shell commands, manage Git repositories, and orchestrate multi-step workflows. It's not a chat window; it's an agent with access to your development environment.

In practice, this means I can say: "Read my Obsidian note at ~/vault/projects/payment-gateway.md and scaffold a Python client based on the API endpoints I documented there." Claude Code opens the file, reads the content, understands the structure, and writes working code that matches what I documented. No copy-pasting between apps. No re-explaining context. The note is the context.

Beyond reading notes, my Claude Code setup is connected to five MCP servers that extend its capabilities, including Gemini for research, Groq for fast generation, and local models via Ollama. I've written about this in detail in my post about AI bot design patterns. The MCP integration means Claude Code isn't limited to its own model's knowledge. It can call out to other AI systems when it needs specialized capabilities.

The combination of file access + command execution + MCP tools means Claude Code operates as a genuine development partner, not a suggestion box. It reads the code, understands the project, makes changes, runs tests, commits to Git, and deploys — all within a single conversation.

NotebookLM: Source Synthesis

The third piece handles the input side of the loop — consuming and understanding new information quickly.

NotebookLM is Google's research tool that ingests documents and lets you interact with them conversationally. You feed it PDFs, web pages, and YouTube transcripts, and it creates a queryable knowledge base from those sources. The key difference from a general chatbot: it grounds its answers in the sources you provided and cites them. That sharply reduces hallucination and the blending-in of random training data, though I still spot-check the cited passages for anything that matters. If the answer isn't in your sources, it generally says so.

The killer feature for my workflow is the audio briefing. I upload a 40-page API specification, a few blog posts about integration patterns, and a YouTube video from the API provider's developer conference. NotebookLM synthesizes all of that into a 15-minute audio overview that I can listen to while walking or making coffee. By the time I sit down to code, I've already internalized the key concepts, the gotchas, and the recommended patterns — without having to read every word of every source.

For structured research, I ask NotebookLM specific questions: "What are the rate limits for the batch endpoint?" "What authentication method does the webhook use?" "What's the difference between their sandbox and production environments?" It pulls the answers directly from the source documents with citations. I take those answers and paste them into my Obsidian notes, where they become part of the permanent knowledge base.
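The pasting step is manual, but keeping every pasted answer in the same shape makes the notes easier to scan later. Here is a small sketch of a helper for that. The function names and the entry layout are my own illustration, not anything NotebookLM or Obsidian prescribes.

```python
from datetime import date
from pathlib import Path


def format_finding(question: str, answer: str, source: str, on: date) -> str:
    """Format one research answer as a Markdown entry with its source citation."""
    return (
        f"### {question}\n\n"
        f"{answer}\n\n"
        f"Source: {source} (captured {on.isoformat()})\n"
    )


def append_finding(note: Path, question: str, answer: str, source: str) -> None:
    """Append a formatted finding to the end of a note, creating the file if needed."""
    note.parent.mkdir(parents=True, exist_ok=True)
    with note.open("a", encoding="utf-8") as handle:
        handle.write("\n" + format_finding(question, answer, source, date.today()))
```

Keeping the source and capture date next to each answer makes it easy to go back to the original document when the API changes.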

How They Work Together: The Loop

Here's the actual workflow, step by step:

- Research (NotebookLM). Upload all relevant sources — docs, papers, videos, articles. Generate audio briefings for high-level understanding. Ask specific questions for technical details. Extract the key information.

- Structure (Obsidian). Create notes from the research findings. Link them to existing project notes and concept notes. Build a structured understanding with sections for endpoints, auth, data models, edge cases, and deployment requirements.

- Execute (Claude Code). Point Claude Code at the Obsidian notes and the project codebase. It reads both, understands the context, and generates working code. It runs tests, handles Git operations, and can deploy to staging.

- Document (Obsidian + Claude Code). After implementation, update the Obsidian notes with what was actually built (vs. what was planned). Claude Code can draft documentation updates based on the code changes it made.

The loop is continuous. Research feeds knowledge, knowledge feeds code, code feeds documentation, and documentation feeds the next round of research when the project evolves.

The three tools work because they share a common substrate: plain text and files on disk. Obsidian writes Markdown files. Claude Code reads and writes files. NotebookLM's answers are plain text that I paste into those files. No APIs connecting them. No integrations to maintain. Just files.

Practical Example: Integrating a New Payment API

Last month I needed to integrate a payment gateway for a client project. Here's exactly how the workflow played out.

Day 1 morning: Research. I uploaded the payment provider's API docs (3 PDFs, about 80 pages total), their developer blog posts about best practices, and two YouTube videos from their developer conference. NotebookLM generated a 12-minute audio overview. I listened to it during my morning walk. Key insights: their idempotency model is different from Stripe's, their webhook verification uses HMAC-SHA256 (not SHA256 alone), and their sandbox has intentional failure modes for testing error handling.

Day 1 afternoon: Notes. I created ~/vault/projects/client-x/payment-integration.md in Obsidian. Sections: Authentication, Endpoints (charge/refund/webhook), Data Models, Error Handling, Idempotency Notes, Testing Strategy. I linked this to my existing notes on payment processing patterns and the project's architecture overview. Total note: about 800 words of structured, referenced information.
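Since the note is the context Claude Code works from, it helps to confirm that no section is still empty before handing it over. A minimal sketch of such a check follows. It assumes each section is a Markdown heading with exactly the names listed above, and it does not account for `#` lines inside code fences.

```python
import re

# Section names taken from the note structure described above.
EXPECTED_SECTIONS = [
    "Authentication", "Endpoints", "Data Models",
    "Error Handling", "Idempotency Notes", "Testing Strategy",
]

HEADING = re.compile(r"^#{1,6}\s+(.*?)\s*$")


def split_sections(markdown: str) -> dict[str, str]:
    """Map each heading title to the body text that follows it."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            current = match.group(1)
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
    return {title: "\n".join(body).strip() for title, body in sections.items()}


def missing_sections(markdown: str, expected=EXPECTED_SECTIONS) -> list[str]:
    """Return the expected sections that are absent or have no content yet."""
    sections = split_sections(markdown)
    return [name for name in expected if not sections.get(name)]
```

If `missing_sections` returns anything, that is a prompt to go back to NotebookLM before writing code, because a gap in the note tends to become a guess in the implementation.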

Day 2: Code. I opened Claude Code and said: "Read ~/vault/projects/client-x/payment-integration.md and the existing codebase at ~/projects/client-x/. Create a payment gateway client in src/payments/ with the methods and error handling documented in the notes." Claude Code read both, scaffolded the client class, implemented the HMAC webhook verification (getting the algorithm right because it was in my notes), and wrote unit tests with mock responses. It committed everything to a feature branch.
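To show why the HMAC detail matters, here is a generic sketch of HMAC-SHA256 webhook verification. It is not the provider's actual scheme or the code Claude Code generated. It assumes the signature is a hex digest of the raw request body, whereas real providers often add a timestamp or use base64, so check the provider's docs.

```python
import hashlib
import hmac


def sign(secret: bytes, payload: bytes) -> str:
    """Compute the hex HMAC-SHA256 of the raw payload using the shared secret."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, payload: bytes, signature_hex: str) -> bool:
    """Return True if the received signature matches the one we compute.

    compare_digest runs in constant time, which avoids leaking how many
    leading characters of the signature were correct.
    """
    expected = sign(secret, payload)
    return hmac.compare_digest(expected.encode("utf-8"), signature_hex.encode("utf-8"))
```

A plain `hashlib.sha256(payload).hexdigest()` would not pass this check, because it leaves out the shared secret. Anyone could compute that hash for a forged payload, which is why mixing up the two matters.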

Day 2 afternoon: Document. I updated the Obsidian note with implementation details: which design patterns I used, a note about a quirk in their date formatting, and a link to the Git commit. Next time I — or anyone on the team — needs to understand this integration, the full context is in one place.

Total time from "I've never used this API" to "tested, committed code with documentation": about 8 hours across two days. Without this workflow, based on past experience, the same work would have taken 3-4 days.

If you're building similar automation workflows for your team, the same principles apply at any scale. The tools are free or low-cost, the workflow is repeatable, and the knowledge compounds over time.

The value isn't in any single tool. It's in the loop: research feeds notes, notes feed code, code feeds documentation. Each step makes the next one faster. After a year of using this stack, my Obsidian vault is a searchable encyclopedia of everything I've learned and built, and Claude Code can draw on all of it when building new things. The compounding effect is real. If you want help building an AI research workflow for your team, reach out.